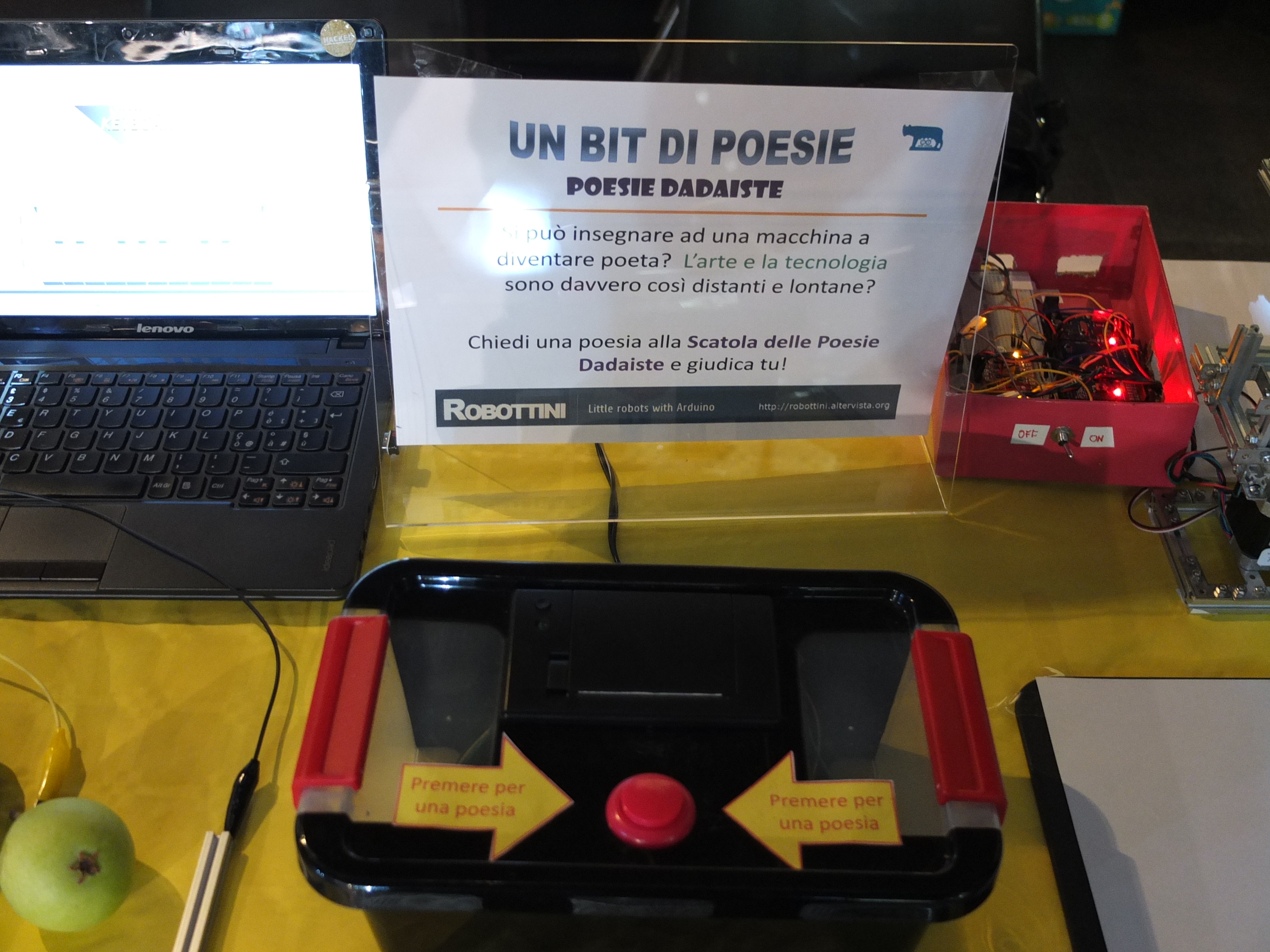

My second experiment (the first is here) in making art, or something like it, with Arduino is the Dadaist Poetry Box.

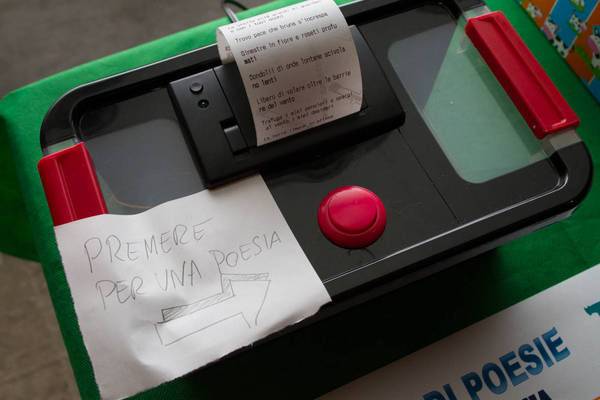

It is built around an Arduino and uses a receipt printer to write poems. The box composes the poems autonomously, thanks to an algorithm running on the Arduino. Push the button and here is your Dadaist poem. Each poem is different from the previous one: original, new. Just press a button and out comes a poem. Simple, immediate, trivial.

Normally a poem is a valuable thing, the result of an intimate moment in which the poet puts the emotions of his soul on paper. It is an inspired act, an act of concentration and transport. It is not immediate. The poetry box, by contrast, is trivial; it seems almost anti-poetry. But it is not: it is Dadaist poetry. In one of his writings, the famous poet Tristan Tzara, one of the major Dadaist poets, describes how to produce a Dadaist poem. The following passage explains how to proceed:

- Take a newspaper.

- Take some scissors.

- Choose from this paper an article the length you want to make your poem.

- Cut out the article.

- Next carefully cut out each of the words that make up this article and put them all in a bag.

- Shake gently.

- Next take out each cutting one after the other.

- Copy conscientiously in the order in which they left the bag.

- The poem will resemble you.

- And there you are—an infinitely original author of charming sensibility, even though unappreciated by the vulgar herd.
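Tzara's recipe is, in effect, a shuffle of the words of a text. As a minimal sketch in plain C++ (not the code running in the box, which picks from predefined verses), it could look like this. The function names `cutWords` and `dadaPoem` are made up for illustration.

```cpp
#include <algorithm>
#include <random>
#include <sstream>
#include <string>
#include <vector>

// "Cut out each of the words": split the article on whitespace.
std::vector<std::string> cutWords(const std::string& article) {
    std::istringstream in(article);
    std::vector<std::string> words;
    std::string word;
    while (in >> word) {
        words.push_back(word);
    }
    return words;
}

// "Shake gently", then copy the words in the order they leave the bag.
std::string dadaPoem(const std::string& article, std::mt19937& rng) {
    std::vector<std::string> bag = cutWords(article);
    std::shuffle(bag.begin(), bag.end(), rng);

    std::string poem;
    for (const std::string& word : bag) {
        if (!poem.empty()) {
            poem += ' ';
        }
        poem += word;
    }
    return poem;
}
```

Seeding the generator with `std::mt19937 rng(std::random_device{}());` and calling `dadaPoem(article, rng)` should give a different ordering on most runs, while every word of the article appears exactly as often as it did before.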

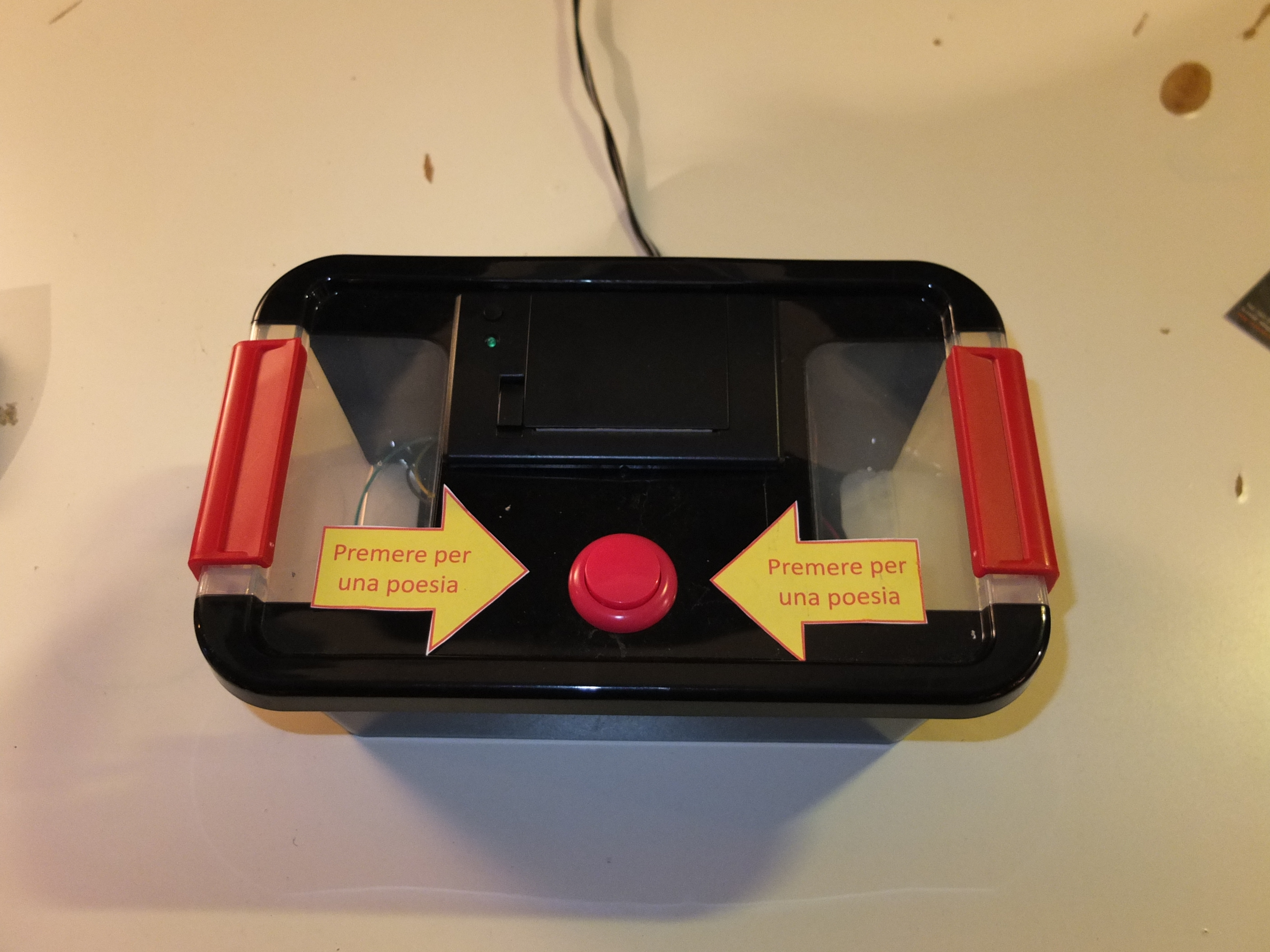

The Dadaist poetry box is based on an Arduino Uno. These are the components:

– Arduino Uno

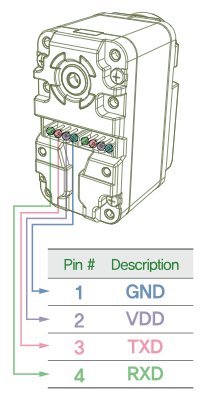

– SparkFun thermal printer

– A big button

The program is based on predefined verses, which the Arduino chooses with a random algorithm. The thermal printer is driven by the Arduino using the SoftwareSerial library. The verses are stored in the Arduino's program memory (almost 7 KB).
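To give an idea of how these pieces might fit together, here is a minimal sketch. It is not the original Generatore_di_poesie_V4 code. The pin numbers, the 19200 baud rate, the four-line poem length, the English placeholder verses and the helper `pickVerseIndex` are all assumptions made for illustration. The board-specific part is wrapped in `#ifdef ARDUINO` so that the small index helper can also be compiled and checked off-board.

```cpp
#include <assert.h>
#include <stddef.h>

// Map a raw random number onto a valid verse index (hypothetical helper).
size_t pickVerseIndex(unsigned long roll, size_t verseCount) {
    assert(verseCount > 0);
    return (size_t)(roll % verseCount);
}

#ifdef ARDUINO
#include <Arduino.h>
#include <SoftwareSerial.h>
#include <avr/pgmspace.h>

// All wiring and settings below are assumptions; adapt them to your build.
const uint8_t BUTTON_PIN = 2;      // button between pin 2 and GND
const uint8_t PRINTER_RX_PIN = 5;  // Arduino receives from the printer here
const uint8_t PRINTER_TX_PIN = 6;  // Arduino transmits to the printer here
const long PRINTER_BAUD = 19200;   // check the printer's self-test page
const uint8_t LINES_PER_POEM = 4;

// Placeholder verses kept in flash (PROGMEM) instead of the Uno's 2 KB SRAM.
const char verse0[] PROGMEM = "the moon forgets its name";
const char verse1[] PROGMEM = "a bicycle sings in the soup";
const char verse2[] PROGMEM = "tomorrow was a green umbrella";
const char verse3[] PROGMEM = "clocks melt under the staircase";
const char verse4[] PROGMEM = "silence wears a paper hat";
const char* const VERSES[] PROGMEM = {verse0, verse1, verse2, verse3, verse4};
const size_t VERSE_COUNT = sizeof(VERSES) / sizeof(VERSES[0]);

SoftwareSerial printer(PRINTER_RX_PIN, PRINTER_TX_PIN);

void printPoem() {
    char line[33];  // up to 32 characters per printed line, plus terminator
    for (uint8_t i = 0; i < LINES_PER_POEM; i++) {
        size_t index = pickVerseIndex((unsigned long)random(2147483647L), VERSE_COUNT);
        // Copy the chosen verse from flash into RAM before printing it.
        strncpy_P(line, (PGM_P)pgm_read_word(&VERSES[index]), sizeof(line) - 1);
        line[sizeof(line) - 1] = '\0';
        printer.println(line);
    }
    printer.println();  // blank lines to feed the paper past the cutter
    printer.println();
}

void setup() {
    pinMode(BUTTON_PIN, INPUT_PULLUP);
    randomSeed(analogRead(A0));  // noise from an unconnected analog pin
    printer.begin(PRINTER_BAUD);
}

void loop() {
    if (digitalRead(BUTTON_PIN) == LOW) {  // pressed (pull-up wiring)
        printPoem();
        delay(50);                                 // crude debounce
        while (digitalRead(BUTTON_PIN) == LOW) {}  // wait for release
    }
}
#endif
```

Keeping the verses in flash matters on an Uno: several kilobytes of text would not fit in its SRAM, so each verse is copied into a small buffer only when it is about to be printed.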

The verses are in Italian, so if you want poems in English, you will have to rewrite all the verses!

Some pictures:

This is a short video of the box:

This is the source code: Generatore_di_poesie_V4